Moltbook is not magic

Why do we crave hyperbole in AI?

ICYMI:

The AI Battle tearing MAGA apart: The Trump administration claims to be pro-AI. It’s not as simple as that.

A Third Way for AI and Geopolitics: Better leadership on AI is needed – it needn’t come only from the United States

TEDAI talk: What the Space Race can teach us about AI (delivered in Vienna in September, now available to view online)

When a politician is trying to avoid answering a question with no politically acceptable answer, they will often say: “Look, I’m not going to give a running commentary on [the Chancellor’s next budget / Reform’s poll numbers / etc]”

I worked in politics many moons ago and this always led us advisers to joke, “What have they all got against running commentaries?!”

Perhaps not the strongest stand-up material, but it made us laugh, and I was reminded of it today when sitting down to write this post.

Because my plan for this Substack is absolutely not to spend each post commentating on every bout of topical AI madness out there, every flash-in-the-pan fad or scandal.

That said…

Things caught fire last weekend with OpenClaw/Moltbook – and the furore raises wider questions over how we talk about AI’s capabilities and how to manage them. So allow me to answer the question directly.

Beware the Squirrel

First, some quick context:

I am not a doomer, and never have been. I started working in AI because I was/am so excited about the potential.

However, I have spent the past 15 years of my career focused on what might be loosely explained as the unintended consequences of technology on the fabric of society.

So please trust me when I say that I do not take the downsides of AI lightly.

But these downsides, and the measures needed to mitigate them, are not best observed or analysed in a climate of fear and hyperbole.

This is why, back in 2023, I wrote a piece for the popular and excellent Comment is Freed, urging those whose first experience of AI was ChatGPT to keep their cool.

Yes, I had then-Prime Minister Rishi Sunak primarily in mind. I thought that the focus of his ‘AI Safety Summit’ was misguided and panic-driven – serving more to freak people out about an existential threat from AI, for which there was little compelling evidence, than to address the credible risks.

But my piece was also a word of caution to the industry itself which, post-ChatGPT, was suddenly flooded with hype and projection.

I wanted to draw a line in the sand between years’ worth of sociotechnical research into the risks and mitigations of AI, and the assumptions required to leap from there to a ‘superintelligence’ that renders humans obsolete.

AI has progressed significantly in the three years since I wrote this piece – but re-reading it today, I think it is more relevant than ever.

The illusion of the singularity

“As soon as it works, no one calls it AI anymore” - John McCarthy

Over the weekend, a new craze swept ‘the old-people internet’ (you know: X, Bluesky, Instagram).

An open-source agentic assistant called OpenClaw (previously named Moltbot) had recently experienced a surge in popularity. Entrepreneur Matt Schlicht then launched Moltbook, a Reddit-esque ‘social network’ exclusively for OpenClaw agents to interact.

Screenshots of some of the more eyebrow-raising ‘discussions’ between those agents (including some that seemed to found a new religion or plot to unionise) then led some people to fear that the age of superintelligent AI operating outside human control was upon us.

A typical post went something like: “It’s over.” Elon Musk, never late to hop on a trend, said he believed Moltbook marks “the very early stages of the singularity.”

*

In truth, Moltbook is not proof of the emergence of superintelligence. These agents are designed to sound like humans, and the fact that they repeat all of our sci-fi projections is not a sign of human-like consciousness; it’s a sign that the model has ingested and can regurgitate all the fantastical stories humans have written over the years about robots taking over.

In fact, the security firm Wiz conducted research which suggested that the vast majority of agents were not autonomous at all, but a fleet of Mechanical Turks controlled by humans.

That doesn’t mean it’s not unnerving. And it doesn’t mean there aren’t lessons to be learned.

As experts have warned for a long time, there are many practical risks to agentic AI entering the mainstream. To get an inkling of the real-world implications of increasingly unpredictable outcomes of conversations between agents, with or without human involvement, it’s worth taking a moment to remember the Flash Crash of 2010, when an automated sell order interacted with a series of high-frequency trading algorithms to cause a 36-minute, trillion-dollar US stock market crash.

Imagine how much worse that could get if untested, increasingly capable agents were set loose on critical systems too soon.

Whether we do that at all is, of course, a choice. But fortunately, when it comes to mitigating these concerns, we’re not starting from scratch. Away from the hype, experts have already been working to measure and address the risks of agentic AI. (One example is the Center for Security and Emerging Technology’s excellent recent paper exploring the rise of automated AI R&D.)

And this is one of the key points I made in my 2023 essay:

Seeing some of the things that AI can produce for the first time can be disconcerting. But that doesn’t mean that it is frightening or out of control.

Experts have been working to counter the adverse effects of AI for a long time. The reason ChatGPT, when it launched, avoided the kind of racist or inflammatory outputs produced by Microsoft’s ‘Tay’ chatbot before it was that OpenAI’s safety and ethics team had responded to research about the biases present in AI systems, and had innovated both technical protections and transparency solutions like Model Cards.

Human beings have always managed the risks of new technologies, and this time should be no different, as long as we have the resources, time, and incentives to put in the work.

So why is AI so often described in this hyperbolic way, even by those deeply involved with its deliberate design and development?

The AI safety movement

Moltbook came hot on the heels of Anthropic co-founder Dario Amodei’s recent essay in which he predicts that AI’s growing autonomous capabilities will soon test the resilience of our social and political systems.

There are a couple of reasons that technologists talk about AI in such apocalyptic terms:

They genuinely believe it. This is true of many prominent voices, including Dario, who deserve to be listened to. But their opinions should be put into perspective. AI scientists are immersed in their field, but they are not experts in operations, complex systems, or the messy realities of implementing AI in practice (see Geoff Hinton’s earlier predictions about the imminent replacement of radiologists).

It’s a marketing ploy. This can be a very cynical take, and is not aimed at the true believers above. However, there’s no doubt that some AI ‘influencers’ deal in dramatic language because it helps their business goals (who wants to miss out on investing in superintelligence?!) and places them firmly in the driving seat (“if I’m the only one who understands the potential of this technology, I should be the one to educate and decide its future”).

I do not doubt Dario’s motives. He was one of the scientists on the board of Partnership on AI, an organisation I co-founded in 2016 as a trusted space to discuss the future challenges of AI, and he has been passionate about safety for years. I recognise the desire to raise the alarm among scientists who genuinely want to build something useful but fear their creation being used for ill.

But wrapping sensible policy recommendations in a layer of drama, and depicting AI as an independent, almost mystical force, does not help us reach good conclusions.

Not everything about AI has to be scary

Of course, the fact that so many people speak about AI in this way doesn’t mean they are wrong. But new technology has always brought winners and losers, opportunities and challenges.

In a future edition, I will share more on the specific things I worry about when it comes to AI, which I’ve termed the ‘3 S’s’: security, stupidity, and surveillance.

I think there is enough plausible evidence to suggest that new AI capabilities might be a threat to the cybersecurity of individuals, companies and countries; that an over-reliance on AI might numb our own powers of critical thinking; and that it is already being used to expand the reach of both corporate and state surveillance in ways that reduce the vibrancy and freedom of human life.

But the history of transformative technologies suggests that AI will not be the one technology, in thousands of years of human history, that we prove unable to adapt to.

Even if we can’t all agree on that, we can surely at least agree that an endless cycle of dramatic open letters, personal manifestos, and alarmist statements is not the best way to handle anything, because it breeds only overreaction or frozen apathy.

There is a saying from the American kids’ TV hero Mister Rogers, to help people cope with scary events: “Look for the helpers”.

If you are unnerved or worried about AI, allow me to offer a modified version: “Look for the doers.”

They are there, they are working on it, and you can work on it too if you wish. We should prepare, but let’s not panic.

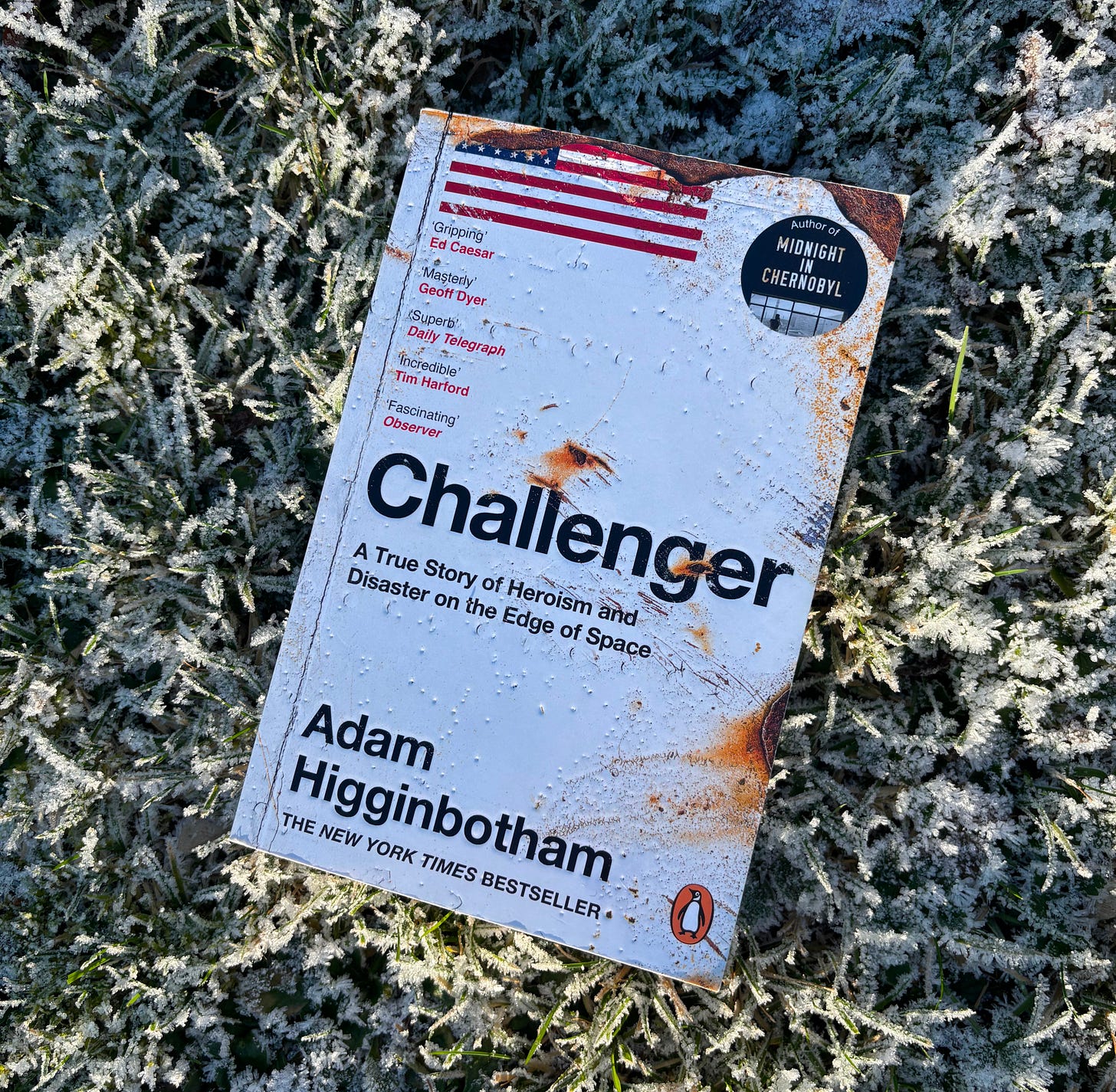

Book of the Week

I loved Adam Higginbotham’s first book, ‘Midnight in Chernobyl’, a gripping look at the 1980s nuclear disaster. This follow-up, on the Challenger explosion, is another deeply reported and well-written tale of the systemic, organisational and human failures that led to an unnecessary loss of life. If we’re going to think about how to avoid future AI disasters, we could do worse than to read, and heed the lessons of, both of Higginbotham’s books.
